A 2026 study by USAN found that 98% of contact centers have deployed AI in some form. A separate Intercom report found that only 10% have reached mature, production-scale deployment. The gap between those two numbers is not a technology problem. It is an implementation problem — and it is costing businesses billions in unrealized returns.

The AI adoption paradox is the defining challenge of customer service operations in 2026. Organizations are spending real money — enterprise generative AI software spend tripled in a single year, from $11.5 billion to $37 billion according to Menlo Ventures — but the outcomes are not matching the investment. Gartner found that 85% of customer service leaders are exploring or piloting conversational AI, and 91% are under executive pressure to implement it. Yet the same research firm warns that 50% of companies that cut customer service staff due to AI will need to rehire by 2027, because the implementations did not deliver the promised results.

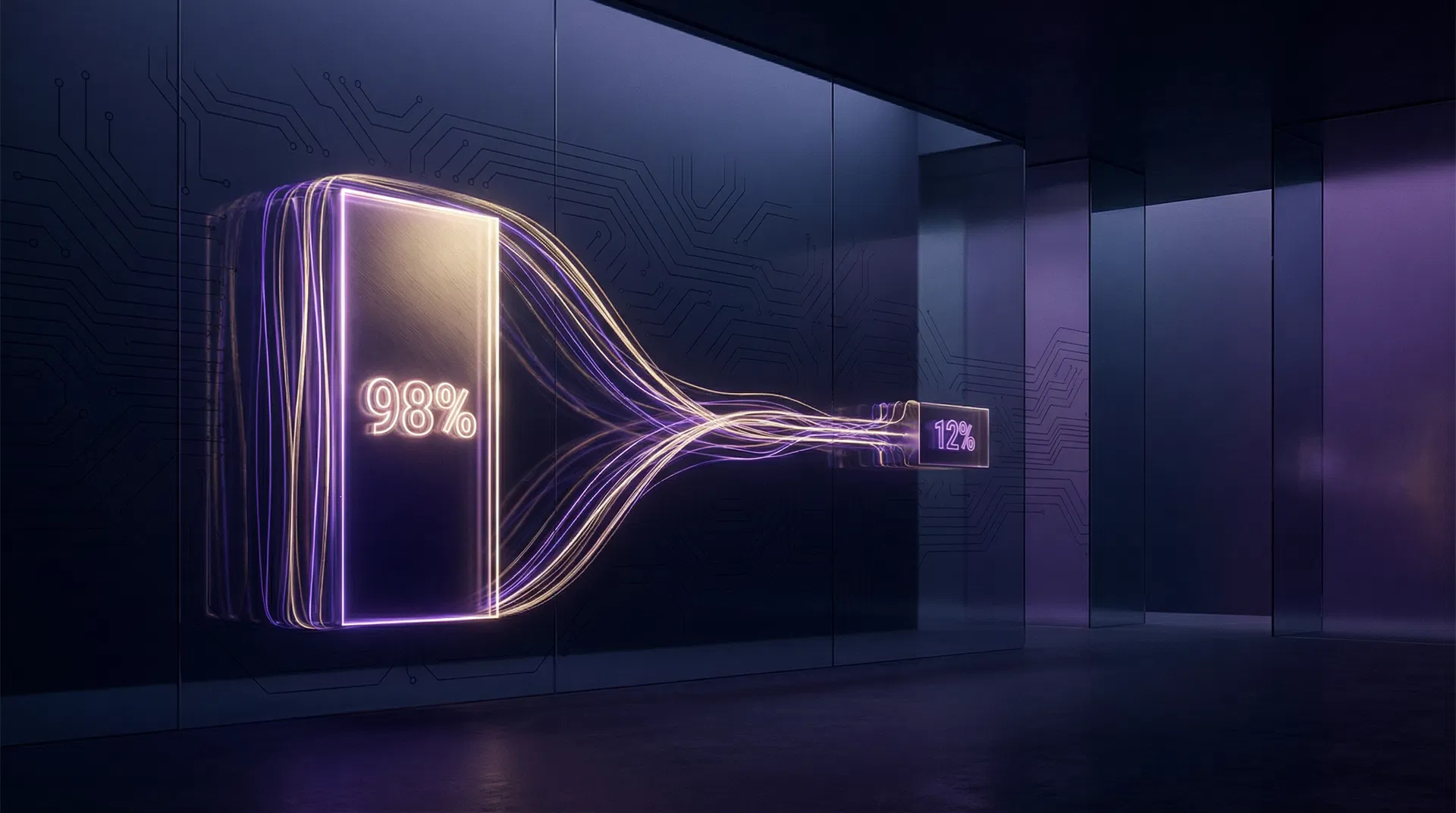

"98% of contact centers use AI. Only 12% have a fully optimized strategy. The gap between those two numbers is where most AI budgets go to die."

This article is about that gap. Not about which AI tools to buy — there is no shortage of vendor comparisons. This is about why well-funded, well-intentioned AI implementations consistently fail to deliver, what the organizations that do get results do differently, and how to audit your own implementation against those benchmarks.

The Numbers Behind the Gap

The adoption gap is not a matter of opinion — it is documented across multiple independent research sources, all pointing to the same conclusion. The table below captures the most important data points from 2025 and 2026 primary research.

| Metric | Value | Source | What It Means |
|---|---|---|---|
| Contact centers using AI in some form | 98% | USAN, 2026 | Near-universal adoption at the surface level |
| Contact centers with a fully optimized AI strategy | 12% | USAN, 2026 | The actual performance gap |
| Organizations with scaled AI agents in CS | ~10% | Intercom, 2026 | Confirms the USAN finding independently |
| CS leaders under executive pressure to implement AI | 91% | Gartner, 2025 | Explains why pilots launch before strategy exists |
| Organizations planning agentic AI within 2 years | 75% | Deloitte, 2026 | The gap is about to get more expensive |
| Companies that cut CS staff due to AI and will rehire by 2027 | 50% | Gartner forecast | The cost of premature headcount reduction |
| AI implementations that remain surface-level only | 37% | Deloitte, 2026 | Adoption without transformation |

The pattern is consistent: organizations adopt AI under pressure, deploy it at a surface level, measure the wrong outcomes, and then either declare success prematurely or quietly abandon the initiative. Neither outcome is acceptable when the cost of getting AI wrong is this well documented.

The Cost of the Gap

Gartner benchmarks the median cost per contact at $1.84 for self-service versus $13.50 for agent-assisted interactions, roughly a 7x difference. AI-native platforms operating at full optimization deliver resolutions in the $1 to $3 range. The math is straightforward: an organization handling 50,000 contacts per month that achieves even a 30% shift from agent-assisted to AI-resolved interactions moves 180,000 contacts per year. At Gartner's median figures, each shifted contact saves $11.66, which works out to roughly $2.1 million in annual cost reduction. If each AI resolution costs closer to $4, the same shift is still worth about $1.7 million.
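The arithmetic behind that estimate is easy to check. The sketch below is a minimal illustration, not part of the Gartner research: the function name and parameters are hypothetical, and the model simply multiplies the shifted contact volume by the per-contact cost difference, ignoring platform fees and repeat contacts.

```python
def annual_savings(contacts_per_month: int,
                   shift_rate: float,
                   agent_cost: float = 13.50,
                   ai_cost: float = 1.84) -> float:
    """Annual savings from moving a share of contacts from agents to AI.

    Defaults are the Gartner median costs per contact quoted in the text.
    The function itself is a hypothetical helper for illustration only.
    """
    shifted_per_year = contacts_per_month * 12 * shift_rate
    return shifted_per_year * (agent_cost - ai_cost)


# 50,000 contacts per month with a 30% shift at the median costs
at_median = annual_savings(50_000, 0.30)

# Same volume, assuming each AI resolution costs $4.00 instead of $1.84
at_four_dollars = annual_savings(50_000, 0.30, ai_cost=4.00)

print(f"At median costs: ${at_median:,.0f}")
print(f"At $4 per AI resolution: ${at_four_dollars:,.0f}")
```

At the median costs the result is about $2.1 million a year. A figure near $1.7 million results if each AI resolution is assumed to cost about $4 rather than $1.84, which shows how sensitive the estimate is to the per-resolution cost you plug in.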

But that is the optimized scenario. For the 88% of organizations that have deployed AI without optimizing it, the cost picture looks very different. A poorly configured AI system that deflects tickets without resolving them does not save $11.66 per contact — it generates a follow-up contact at full agent cost, plus the customer frustration of having been sent through an automated dead end. The net result is higher cost and lower satisfaction than the pre-AI baseline.
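A simple expected-cost model makes the dead-end effect concrete. The sketch below rests on two illustrative assumptions that do not come from the cited research: every AI attempt costs the self-service median, and every unresolved AI attempt comes back as exactly one agent-assisted contact at full cost. The function name and parameters are hypothetical.

```python
def cost_per_issue(ai_attempt_rate: float,
                   ai_resolution_rate: float,
                   ai_cost: float = 1.84,
                   agent_cost: float = 13.50) -> float:
    """Expected cost per customer issue under the assumptions above.

    ai_attempt_rate:    share of issues first routed to the AI
    ai_resolution_rate: share of those AI attempts that solve the issue
    """
    # Issues that go straight to a human agent
    direct_to_agent = (1 - ai_attempt_rate) * agent_cost
    # Every AI attempt is paid for, whether or not it resolves anything
    ai_attempts = ai_attempt_rate * ai_cost
    # Unresolved AI attempts return as a follow-up contact at full agent cost
    repeat_contacts = ai_attempt_rate * (1 - ai_resolution_rate) * agent_cost
    return direct_to_agent + ai_attempts + repeat_contacts


baseline = cost_per_issue(0.0, 0.0)    # no AI at all
dead_end = cost_per_issue(0.40, 0.0)   # 40% deflected, nothing resolved
working = cost_per_issue(0.40, 0.60)   # 40% routed to AI, 60% of those resolved

print(f"baseline ${baseline:.2f}, dead end ${dead_end:.2f}, working ${working:.2f}")
```

With 40% of issues routed to AI and none resolved, the expected cost is about $14.24 per issue, above the $13.50 all-agent baseline. Under these assumptions the model only drops below the baseline once the AI resolves more than roughly 13.6% of the attempts it handles (1.84 / 13.50).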

| Scenario | Cost Per Contact | Annual Cost (50K contacts/mo) | Savings vs. No-AI Baseline |
|---|---|---|---|
| Fully agent-assisted (no AI) | $13.50 | $8.1M | Baseline |
| Surface-level AI (deflects, rarely resolves) | $10–12 | $6–7.2M | 15–25% savings |
| Optimized AI (30% full resolution) | ~$9.50 blended | $5.7M | 30% savings |
| AI-native platform (55–70% FCR) | $3–5 blended | $1.8–3M | 63–78% savings |

The difference between surface-level AI and a fully optimized, AI-native deployment is not incremental. It is the difference between a 15–25% cost reduction and a 63–78% cost reduction on the same contact volume. For a mid-size operation handling 50,000 contacts per month, that gap is worth roughly $3 to $5 million per year.

Five Reasons Most AI Implementations Fail to Deliver

1. Deflection Is Measured Instead of Resolution

The most common measurement mistake in AI customer service is tracking deflection rate — the percentage of contacts that do not reach a human agent — rather than resolution rate — the percentage of contacts where the customer's issue was actually solved. These are not the same metric, and optimizing for deflection without measuring resolution is how organizations end up with high deflection rates and deteriorating CSAT scores simultaneously. Gartner found that only 14% of issues fully resolve through traditional self-service channels. If your AI is deflecting 40% of contacts but resolving only 14% of them, you have not reduced your cost — you have generated a wave of frustrated repeat contacts.
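The distinction is easy to see when both metrics are computed from the same ticket records. The sketch below is illustrative only: the `Ticket` fields are hypothetical and would need to be mapped to whatever your helpdesk actually exports.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    reached_agent: bool  # did a human agent end up handling this contact?
    resolved: bool       # was the customer's issue actually solved?


def deflection_rate(tickets: list) -> float:
    """Share of contacts that never reached a human agent."""
    return sum(not t.reached_agent for t in tickets) / len(tickets)


def ai_resolution_rate(tickets: list) -> float:
    """Share of contacts solved without any human involvement."""
    return sum((not t.reached_agent) and t.resolved for t in tickets) / len(tickets)


# Ten sample contacts: 6 handled by agents, 4 deflected, only 1 of those solved
sample = (
    [Ticket(reached_agent=True, resolved=True)] * 6
    + [Ticket(reached_agent=False, resolved=False)] * 3
    + [Ticket(reached_agent=False, resolved=True)]
)

print(f"deflection rate:    {deflection_rate(sample):.0%}")
print(f"AI resolution rate: {ai_resolution_rate(sample):.0%}")
```

In this sample the dashboard would report 40% deflection while only 10% of contacts were resolved by the AI. The three deflected but unresolved contacts are the ones likely to come back as repeat contacts.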

2. The Implementation Starts With the Tool, Not the Data

AI customer service tools are only as good as the data they are trained on and connected to. An AI that cannot access real-time order status, return eligibility, account history, and product information cannot resolve the tickets that make up 60–80% of ecommerce support volume. Most failed implementations deploy the AI layer first and attempt to connect the data layer afterward — which is exactly backwards. The organizations that achieve 55–70% first contact resolution rates build the data infrastructure before they configure the AI, not after.

3. Change Management Is Treated as Optional

AI customer service implementations fail at the human layer as often as they fail at the technical layer. Agents who do not understand how the AI works, do not trust its outputs, or feel threatened by its presence will route around it — escalating tickets that the AI could have resolved, overriding its suggestions, and creating the perception that the tool is not working when the real problem is adoption. Effective change management — training, transparent communication about the AI's role, and clear escalation protocols — is not a nice-to-have. It is a prerequisite for achieving the deflection and resolution rates that make the ROI case work.

4. The Scope Is Too Broad at Launch

The organizations that get AI right start narrow. They identify the two or three ticket types with the highest volume, the most predictable resolution paths, and the clearest data availability — and they automate those first. They measure for 60 days, optimize, and then expand. The organizations that fail typically try to automate everything at once, achieve mediocre performance across all ticket types, and cannot identify which part of the implementation to fix. A 70% resolution rate on WISMO tickets is a better foundation for expansion than a 25% resolution rate across all ticket types.

5. Success Is Declared Too Early

AI implementations often show strong early metrics that erode over time. The initial deflection rate reflects the easiest tickets — the ones the AI was explicitly trained on. As ticket complexity increases, as product lines change, as customer behavior shifts, an AI that is not continuously monitored and updated will degrade. The organizations that sustain results treat AI implementation as an ongoing operational discipline, not a one-time project. They have someone responsible for monitoring resolution rates, CSAT on AI-handled tickets, and escalation patterns — and they act on that data monthly, not annually.
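One lightweight way to catch this erosion is to compare each month's resolution rate against the launch baseline. The sketch below is an assumption-laden illustration: the function name, the two-month baseline window, and the 5-point drop threshold are all hypothetical choices, not figures from the research cited here.

```python
def flag_degradation(monthly_rates: list,
                     baseline_months: int = 2,
                     max_drop: float = 0.05) -> list:
    """Return the indexes of months whose resolution rate fell more than
    max_drop below the average of the first baseline_months months."""
    baseline = sum(monthly_rates[:baseline_months]) / baseline_months
    return [
        month
        for month, rate in enumerate(monthly_rates)
        if month >= baseline_months and baseline - rate > max_drop
    ]


# Hypothetical resolution rates for the six months after launch
rates = [0.62, 0.60, 0.59, 0.57, 0.54, 0.50]
flagged = flag_degradation(rates)
print(flagged)  # month indexes (0-based) that need a configuration review
```

Here the fifth and sixth months are flagged, which is the point at which a monthly review would trigger retraining or configuration changes. An annual review would not see the decline until well after it had compounded.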

What the 12% Do Differently

The research on high-performing AI implementations is consistent across sources. The organizations that achieve production-scale results share four operational characteristics that distinguish them from the 88% that do not.

They measure resolution, not deflection. Every high-performing implementation tracks first contact resolution rate, CSAT on AI-handled tickets, and escalation rate as primary KPIs. Deflection rate is a secondary metric — useful for capacity planning, not for evaluating whether the AI is actually working.

They build the data layer before the AI layer. Real-time access to order management systems, returns platforms, account history, and product databases is a prerequisite, not an afterthought. The AI is only as capable as the data it can access.

They start with a narrow scope and expand deliberately. The first deployment covers two or three ticket types. Performance is measured for 60 days before expansion. This approach produces compounding returns: each successful expansion builds on a proven foundation rather than adding complexity to an unstable base.

They treat optimization as an ongoing function, not a project. Someone owns the AI's performance metrics. That person reviews resolution rates, CSAT, and escalation patterns monthly and makes configuration adjustments in response. The AI is not set and forgotten — it is managed like any other operational system.

The Klarna Benchmark: What Best-in-Class Looks Like

Klarna's AI assistant is the most widely cited example of a production-scale AI customer service implementation, and the numbers are worth examining carefully. The system handles two-thirds of all customer service chats — equivalent to the work of 700 full-time agents. It resolves issues in an average of two minutes, compared to eleven minutes for human agents. Customer satisfaction scores on AI-handled interactions are on par with human-agent scores.

What made Klarna's implementation different was not the technology — the underlying models are available to any organization. It was the data infrastructure (Klarna's AI has real-time access to every relevant customer data point), the narrow initial scope (the system launched on a specific set of high-volume, well-defined ticket types), and the organizational commitment to ongoing optimization. Klarna did not deploy AI and declare victory. They built a system and then ran it as an operational discipline.

"Klarna's AI resolves issues in 2 minutes vs. 11 for human agents — with equivalent CSAT scores. The technology is available to any organization. The difference is implementation discipline."

How to Audit Your Current AI Implementation

If you have deployed AI and are not seeing the results the vendor projected, the following four questions will identify where the gap is. They are the same questions GoMagic.ai uses in every free AI audit.

| Audit Question | What a Healthy Answer Looks Like | What a Problem Answer Looks Like |
|---|---|---|
| What is your AI's first contact resolution rate (not deflection rate)? | 35–65% depending on ticket mix | Unknown, or conflated with deflection rate |
| What is CSAT on AI-handled tickets vs. human-handled tickets? | Within 5–10 points of human CSAT | Significantly lower, or not measured |
| What data sources does the AI have real-time access to? | OMS, returns platform, account history, product catalog | FAQ content only, or static knowledge base |
| Who owns the AI's performance metrics and how often are they reviewed? | Named owner, monthly review cadence | No named owner, or reviewed only when something breaks |

If any of those four questions produces a problem answer, you have identified the root cause of your implementation gap. The fix is not a new tool; it is a configuration, data, or process change that can be made within your existing infrastructure. The organizations that move from the 88% into the 12% do not do it by buying more technology. They do it by operating the technology they already have with more discipline.
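The four audit questions can be turned into a rough self-check. The sketch below is a hypothetical illustration: the function, its parameter names, and the source labels are invented for this example, and the cut-offs (35% resolution, a 10-point CSAT gap, 12 reviews a year) are simply the edges of the healthy ranges in the table, treated here as hard thresholds.

```python
from typing import Optional

# Real-time sources named in the audit table (labels are hypothetical)
LIVE_SOURCES = {"oms", "returns_platform", "account_history", "product_catalog"}


def audit(fcr: Optional[float],
          csat_ai: Optional[float],
          csat_human: Optional[float],
          data_sources: set,
          owner: Optional[str],
          reviews_per_year: int) -> list:
    """Return the audit questions that produce a problem answer."""
    problems = []
    if fcr is None or fcr < 0.35:
        problems.append("resolution rate unknown or below 35%")
    if csat_ai is None or csat_human is None or csat_human - csat_ai > 10:
        problems.append("AI CSAT not measured or more than 10 points below human CSAT")
    if not (data_sources & LIVE_SOURCES):
        problems.append("no real-time data sources connected")
    if not owner or reviews_per_year < 12:
        problems.append("no named owner or less than monthly review")
    return problems


healthy = audit(0.48, 82, 86, {"oms", "faq"}, "Support operations lead", 12)
struggling = audit(None, None, 86, {"faq"}, None, 1)

print(healthy)     # an empty list means no problem answers
print(struggling)  # one entry per failed question
```

Each entry in the returned list points at a configuration, data, or process fix rather than a new purchase.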

If you want to run this audit against your own operation — with your actual ticket data, your current tool stack, and a specific prioritized roadmap for closing the gap — that is exactly what GoMagic.ai's free AI audit is designed to produce. The audit takes 30 minutes and delivers a clear picture of where your implementation stands and what it would take to move from the 88% to the 12%.